ABSTRACT

Single-arm phase II trials are very common in oncology. A fixed sample trial may lack sufficient power if the true efficacy is less than the assumed one. Adaptive designs have been proposed in the literature. We propose a Simon’s design based, adaptive sequential design. Simon’s design is the most used fixed sample design for single-arm phase II oncology trials. A prominent feature of Simon’s design is that it minimizes the sample size when there is no clinically meaningful efficacy. We identify Simon’s trial as a special group sequential design. Established methods for sample size re-estimation (SSR) can be readily applied to Simon’s design. Simulations show that simply adding SSR to Simon’s design may still not provide desirable power. We propose some expansions to Simon’s design. The expanded design with SSR can provide even more power.

1. Introduction

In phase II single-arm trials, the primary endpoint is often a binary response rate (e.g., radiological response, tumor response rate, or objective response rate (ORR)) (U.S. Food and Drug Administration 2018). The null hypothesis is that the response rate does not exceed some

, which is a response rate indicating low activity with insufficient clinical benefit. The alternative hypothesis is that the response rate is larger than

. Clinical considerations may suggest some

(which is larger than

) such that any response rate no less than

will be clinically meaningful. The response rate

will depend on the cancer type and available treatment options. For some recalcitrant cancers in patients with specific genes and limited treatment options, even incremental efficacy (e.g.,

) can be clinically meaningful. With over 3500 citations, Simon’s two-stage design (Simon 1989) is the most used design for single-arm phase II oncology trials. Simon’s designs are fixed sample designs. A fixed sample trial designed to provide

power for

will also provide at least

power if the true response rate is larger. This is to say that there is a fixed sample size that can provide adequate power for a range of possible response rates. However, such a trial assumes the lowest clinically meaningful response rate and is the largest trial of all possibilities. The large sample size with this design is unnecessary if the true response rate is higher than

. Oncology trials are usually very expensive and time consuming, so trials with potentially unnecessarily large sample sizes are often undesirable. An ideal sample size is “just about right”, but determining an accurate sample size requires precise knowledge of the true response rate, which is often unknown. Usually, a target response rate

is assumed (which may not be accurate for various reasons) for power calculation. However, the trial will be underpowered if the true response rate

is smaller than the assumed

. Similar concerns about underpowered trial designs are also common in placebo-controlled studies and have motivated extensive research on sample size re-estimation (SSR); such methods have been widely used (e.g., U.S. Food and Drug Administration (CDER and CBER) (2019)) to provide flexibility in trial design. There are mainly two categories of such methods: combination tests/conditional error functions (e.g., Bauer and Kohne (1994); Proschan and Hunsberger (1995)), and adaptive sequential designs (ASD) (e.g., Cui et al. (1999); Müller and Schäfer (2001); Jennison and Turnbull (2003, 2006); Chen et al. (2004); Gao et al. (2008)). Conditional error function-based methods for SSR have been proposed for phase II single-arm studies (e.g., Englert and Kieser 2012; Englert and S 2013; Kunzmann and Kieser 2016). In this article, we propose an ASD that can be used for single-arm trials. We show that Simon’s design is a special kind of group sequential design, and thus can be naturally combined with SSR methods from ASD (Gao et al. 2008, 2013). However, we also show through simulations that simply adding SSR to Simon’s design may not sufficiently increase the power. For this reason, we first augment and expand Simon’s design, following Simon’s design considerations throughout. Then, we combine the expansions with SSR, which provides more power than Simon’s design combined with SSR. The method is an ASD and retains the basic structure and considerations of Simon’s design. Our design is mathematically similar to the sequential design by Chang et al. (1987), but our constraint on sample size is more aligned with that of Simon (1989). Like other two-stage designs (Simon 1989; Jung et al. 2004; Lin and Shih 2004; Englert and Kieser 2012; Englert and S 2013), our method includes a first stage in which a futility analysis is conducted, and the trial is terminated if the efficacy does not reach a clinically meaningful threshold. If the trial is not stopped for futility, further patients are then enrolled to assess efficacy in the second stage. Our method also includes an option for a three-stage design, which is statistically more efficient. One feature of ASD-based SSR (used in our proposal) is that exact inference (Gao et al. 2013) is available, which includes a median unbiased estimate for efficacy and a two-sided exact confidence interval. Further, through simulations, we show that even with an adaptive design, satisfactory operating characteristics (OC) (e.g., power and sample size) are not automatic. We provide several design options for users to explore, compare, and choose from. We recommend that simulations be conducted to investigate the OCs of designs under consideration so that designs with desirable OCs can be selected. The proposed method and simulations are supported by the software Design and Analysis for Clinical Trials (DACT) at https://www.innovatiostat.com/softeware.html, which is free for academic researchers. Computer code is provided in the online supporting material and is also available upon request.

2. Simon’s design and challenges in phase II oncology trials

2.1. Notations

Simon’s design is a two-stage design which includes four parameters . We propose several two-stage designs (the hybrid and the midpoint designs) and a three-stage design in this article, each of which involves similar parameters. We introduce some notation to distinguish the designs and the associated parameters.

2.2. Simon’s design

Let ,

and

be as discussed above. Sample size is calculated assuming that the response rate is

. There are two stages in Simon’s design. In stage 1,

subjects will be enrolled. If no more than

responses are observed, then the trial would be stopped for futility. Otherwise, the trial proceeds to the second stage and enrolls to a total of

subjects for the trial. The null hypothesis will be rejected if more than

responses are observed at the end of the trial. Let

denote the cumulative binomial distribution, and

the binomial probability mass function. Let

. Let

. This is the probability of not rejecting the null hypothesis with the response rate of

(Simon 1989). The type I error is

. The type II error and power are

and

, respectively.

is the probability of early termination. The expected sample size is

. A Simon’s design includes the quadruplet (

,

,

,

), such that under

, the quadruplet satisfies the three considerations/constraints: i) The one-sided type I error should be controlled at a target level

; ii) the type II error should be controlled at

; and iii) the sample size should be minimized when the drug does not have sufficient activity. We refer to these as Simon’s considerations. All methods for sample size calculation for clinical trials share the first two constraints/considerations. The third one aims to select the smallest

under

(among all possible quadruplets) for the minimax design, or the smallest

for the optimal design (Simon 1989). This is a distinctive feature of Simon’s design, and has likely contributed to its huge popularity. Let

denote the quadruplet (

,

,

,

) under

.
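The operating characteristics described in this section can be computed directly from binomial probabilities. The following is a minimal Python sketch, not the DACT implementation. The argument names `r1`, `n1`, `r`, `n` (stage 1 futility cutoff, stage 1 sample size, final cutoff, total sample size) and the example design are illustrative assumptions and are not taken from this article.

```python
from math import comb


def binom_pmf(k, n, p):
    """Binomial probability mass function b(k; p, n)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def binom_cdf(k, n, p):
    """Cumulative binomial distribution B(k; p, n); zero for k < 0."""
    if k < 0:
        return 0.0
    return sum(binom_pmf(i, n, p) for i in range(min(k, n) + 1))


def simon_oc(r1, n1, r, n, p):
    """Return (P(reject H0), P(early termination), expected sample size)
    for a two-stage design when the true response rate is p."""
    n2 = n - n1
    pet = binom_cdf(r1, n1, p)  # stop for futility if stage 1 responses <= r1
    # Continue with x > r1 stage 1 responses, but finish with <= r in total.
    no_reject_late = sum(
        binom_pmf(x, n1, p) * binom_cdf(r - x, n2, p)
        for x in range(r1 + 1, min(n1, r) + 1)
    )
    reject = 1.0 - (pet + no_reject_late)
    expected_n = n1 + (1.0 - pet) * n2
    return reject, pet, expected_n


# Hypothetical example: null rate 0.10, target rate 0.30.
alpha, pet0, en0 = simon_oc(1, 10, 5, 29, 0.10)   # evaluated under the null rate
power, _, _ = simon_oc(1, 10, 5, 29, 0.30)        # evaluated under the target rate
print(f"type I error={alpha:.4f} power={power:.4f} PET0={pet0:.4f} EN0={en0:.2f}")
```

Evaluating the same function under the null rate gives the type I error, the probability of early termination, and the expected sample size; evaluating it under the target rate gives the power.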

2.3. Group sequential design and Simon’s design

Let ,

be random samples from a binary distribution with

, and

. The parameter of interest is

. Let

. Let

be the standard error for

.

is the estimated Fisher’s information time. Then

is approximately

distributed and is the Wald statistic from the observations

.

Let the Fisher’s information times be ,

, where

is the sample size at the

-th stage analysis. Let

be the information fractions, where

is the total information time for the trial. Let

,

,

. Let

be the number of observed responses at

,

. In Simon’s design the trial is terminated for futility at

if

is observed, and the null hypothesis of

is rejected if

is observed at

. Let

be the estimated Fisher’s information time at

. Let

. Then

is the same event as

, and

is the same event as

. That is, Simon’s design is a group sequential design (GSD) in which the trial is terminated for futility if

is observed, and the null hypothesis rejected if

is observed. GSDs are commonly designed by deriving critical boundaries, such as the O’Brien-Fleming boundary (O’Brien and Fleming 1979), the Pocock boundary (Pocock 1977), or $\alpha$-spending (Lan and DeMets 1983), at planned information fractions to control type I error, while the boundaries

and

in Simon’s design are derived from (

,

,

,

) and

.

and

properly control type I error because (

,

,

,

) does.

Pocock’s boundaries satisfy , and O’Brien-Fleming boundaries satisfy

. The motivation for Simon’s design is different from both Pocock’s and O’Brien-Fleming boundaries. Hence, the boundaries are naturally different, with

in Simon’s design.
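The equivalence between count-based cutoffs and Wald-statistic boundaries can be illustrated numerically. The sketch below assumes the Wald statistic is the estimated response rate minus the null rate, divided by the estimated standard error; the function name `wald_z` and the numbers used are hypothetical. Because this statistic is strictly increasing in the number of responses (for counts strictly between 0 and the sample size), an event of the form "at most `r1` responses" is the same as "Wald statistic at most its value at `r1`".

```python
from math import sqrt


def wald_z(x, n, p0):
    """Wald statistic for x responses out of n against null rate p0.
    Assumes 0 < x < n so that the estimated standard error is positive."""
    p_hat = x / n
    se = sqrt(p_hat * (1 - p_hat) / n)  # 1 / se**2 is the estimated information
    return (p_hat - p0) / se


n1, r1, p0 = 10, 1, 0.10             # hypothetical stage 1 size, cutoff, null rate
boundary = wald_z(r1, n1, p0)        # futility boundary on the Wald scale
counts_below = [x for x in range(1, n1) if wald_z(x, n1, p0) <= boundary]
print(boundary, counts_below)        # the counts at or below the cutoff
```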

2.4. Challenges in phase II oncology trials and the need for SSR

A fixed sample design, including Simon’s design, provides adequate power only when the true response rate is no less than the assumed response rate . The power will be less than

if the true response rate is less than

. In practice, a range of response rates can be clinically beneficial. Besides

, prior clinical evidence, or pathological analysis and mode of action may suggest that some optimistic response rate

may be possible. Any assumed response rate is reasonable if it is between

and

. A flexible design is such that it targets some response rate

between

and

, and with a sample size smaller than

, but allows for sample size increase to that similar to

, if the observed response rate is

at the interim analysis, with

. We have identified that Simon’s design is a special type of group sequential design. Hence, it is natural to utilize and combine available methods in ASD with Simon’s design.

3. Adaptive sequential design with binding futility termination boundary

An ASD is the combination of a group sequential design (GSD) with adaptive features such as sample size modification. The first step in an ASD is to select a GSD. Let be the efficacy parameter; larger values of

indicate better efficacy. Let the null hypothesis

be that

, and the one-sided alternative hypothesis

be that

. In a GSD,

(including interim and the final) analyses will be conducted at information times

. Let

be the Wald statistic at the

-th interim analysis. Critical boundaries

and futility boundaries

may be selected, such that the null hypothesis

is rejected if

is observed at any

, and the trial is declared futile and terminated if

is observed for any

. The

’s and

’s are chosen such that the one-sided type I error is controlled at level

. The futility boundaries

can be chosen to be either binding or non-binding. If the Wald statistics for single-arm trials can be constructed, then the usual GSD can be applied to single-arm trials, in a completely parallel manner.

3.1. Applying sample size re-estimation to Simon’s design

3.1.1. The SSR algorithm

An SSR procedure can be used to increase the sample size when the observed effect size is smaller than the assumed one. The SSR procedure in a single-arm oncology trial can be completely parallel to that in ASD for two-arm comparison (e.g., Cui et al. (1999); Müller and Schäfer (2001); Gao et al. (2008)). Let be the MLE of

at the interim analysis with a sample size of

. Then the conditional power of rejecting the null hypothesis at the end of the trial with planned sample size

(Gao et al. 2008) is

. And the estimated conditional power is

, where

. If

, then the sample size may be increased to

, such that

, where

.

can be solved (Gao et al. 2008) as:

Let . Let

be a pre-determined maximum feasible sample size. Then the new sample size may be chosen as

. Let

. The final critical boundary at

will be (see Gao et al. (2008))

, where

Let be the number of responses observed at the final analysis. Let

be the smallest integer such that

Then the null hypothesis is rejected if .
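The two ingredients of the SSR step, conditional power and the adjusted final critical boundary, can be sketched under the usual normal (Brownian motion) approximation for a single interim look. This is a generic textbook form offered as an illustration; the function names, arguments, and numbers are assumptions, and the expressions may differ in notation and detail from formulas (1) to (3) referred to in the text. The adjusted boundary is obtained by requiring that the conditional type I error given the interim result be the same for the new and the planned final analysis.

```python
from math import sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def conditional_power(z1, info1, info_final, crit_final, theta):
    """P(Z_final >= crit_final | interim Wald statistic z1) for effect theta.
    Uses the score statistic Z * sqrt(info), which has independent increments."""
    needed = crit_final * sqrt(info_final) - z1 * sqrt(info1)
    drift = theta * (info_final - info1)
    return 1.0 - _STD_NORMAL.cdf((needed - drift) / sqrt(info_final - info1))


def adjusted_critical_value(z1, info1, info_planned, crit_planned, info_new):
    """Final critical value at info_new that keeps the conditional type I
    error (theta = 0) equal to that of the planned final analysis."""
    score1 = z1 * sqrt(info1)
    scale = sqrt((info_new - info1) / (info_planned - info1))
    needed_planned = crit_planned * sqrt(info_planned) - score1
    return (score1 + scale * needed_planned) / sqrt(info_new)


# Hypothetical numbers: interim Z = 1.0 at half of the planned information.
z1, info1, info_planned, crit = 1.0, 50.0, 100.0, 1.96
info_new = 160.0
crit_new = adjusted_critical_value(z1, info1, info_planned, crit, info_new)
print(conditional_power(z1, info1, info_planned, crit, theta=0.2))
print(conditional_power(z1, info1, info_new, crit_new, theta=0.2))
```

For a single-arm binary endpoint, the information would typically be taken as the sample size divided by the estimated variance of a single response, which is again an assumption of this sketch.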

3.1.2. SSR using continuity correction

Due to the discreteness of the binary distribution, the above procedure for calculating may not exactly control the type I error and a continuity correction (e.g., Feller (1945); Devore (1995)) may need to be used. Let

0.5,

be the continuity corrections for

. Let the continuity corrected binding futility boundary be

, and

correspond to critical boundaries

. Then

(instead of

) can be used to calculate

in the SSR, and

can be obtained in the same way as for

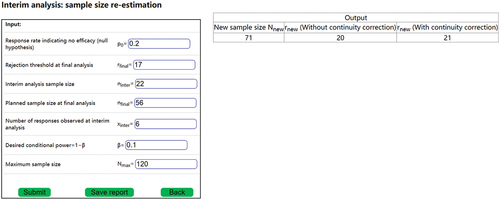

. demonstrates how to conduct interim analysis using DACT. DACT output includes

,

and

.

The interim analysis shown in can accommodate both the two-stage and the three-stage design (see section 3.2.4 on expansions). The sample size re-estimation is conducted at for a two-stage design and at

for a three-stage design. Therefore, for a two-stage design,

,

,

, and

. For a three-stage design,

,

,

, and

. For the input in ,

. However, if

is changed to 7, then

.

The corrections

change the calculations of

and

, which superficially changes the sequential design, but they do not actually change the original two-stage design, because it is discrete. The continuity correction only affects the calculation of

in the SSR.

is calculated using formulas (1) and (2), with

being replaced by

. Then,

is identified using formula (3) with

and

being replaced by

and

, respectively. So

is not actually used in this calculation, so its definition is not strictly necessary. However, not defining

may invite questions that would themselves need explanation, so keeping the definition of

may be a simpler approach. Per formula (1) and (2),

is affected by

,

(which are determined by

,

),

(which is affected by the number of observed responses),

. None of these parameters are associated with the original design (i.e., optimal, minimax, or the expanded design [see section 3.1] such as the average, the hybrid, the midpoint, or the three-stage design).

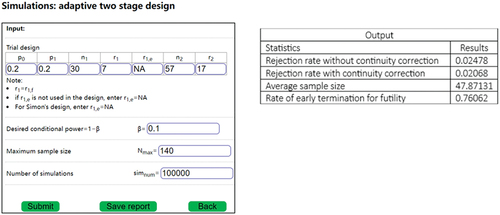

The use of may not always be necessary. The necessity may be checked with simulations. If the simulated type I error using

is less than

, the continuity correction would not be necessary. The simulations can be conducted using the DACT software, as illustrated in .

In , the type I error without continuity correction is <0.025, hence there is no need for the continuity correction.

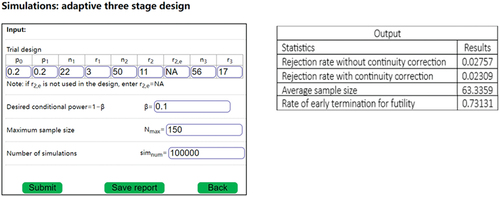

In , the type I error without continuity correction is >0.025, while the type I error with continuity correction is <0.025, hence continuity correction is necessary.

3.2. Expanding Simon’s design

3.2.1. Motivation for expanding Simon’s design

Combining an SSR procedure with Simon’s design, as discussed in the previous section, will add flexibility to Simon’s design and increase the power when the true response rate is lower than the assumed one. However, simulations () show that simply adding the SSR procedure to may still fall short of providing desired power when

is close to

. One of the reasons is that

may be too small and that the related

can be too large. To design a trial that can provide power of about

for all

between

and

, changes must be made to Simon’s design. We seek to expand Simon’s design such that the expanded design is flexible and can satisfy Simon’s considerations for all

between

and

. Let

,

,

and

be defined as in . Because both

and

are among possible response rates, the trial designers could choose either

or

, and both are reasonable designs. Hence

,

and

and

are all acceptable under Simon’s third consideration, and can be considered to be benchmarks, such that the

or

for the new design is permissible if it is no larger than

or

, respectively. Hence, the expansions will be permissible if

or

for the new designs are permissible. Trial designs with smaller

or

(i.e., closer to

or

, respectively) are preferable. Our motivation is to identify designs with permissible

and

, and that they are as close to

or

as possible. Let

be the probability of early futility termination under

for a design. The power of combining SSR to a design will not exceed

, regardless of whether the method is combination test/conditional error function-based SSR (e.g., Englert and Kieser (2012); Englert and S (2013)) or an ASD method (such as the one proposed in this article). In order to achieve a power of

when

is close to

,

must be required for the design. For

,

is automatically satisfied. But for

, it is possible that

. When this happens, simply applying SSR to

will not provide desired power when

is close to

. Applying SSR to

will not be necessary since the design already has enough power for all response rates no less than

. A suitable design would likely have a sample size between those for

and

. We propose several expansion options, which will be different compromises between

and

. Each will have a planned sample size smaller than

, but with SSR, can provide more power than that of

combined with SSR. Each of the expansions will satisfy Simon’s considerations, and that

. The last condition means that the sample sizes will need to be larger than that for

. The expansions include a hybrid design, a midpoint design, and a three-stage design. However, before these expansions, we propose an average design, which is an augmentation of Simon’s optimal and minimax designs, but not an expansion. In Simon’s design,

is a futility cutoff and will be denoted as

, while

is an efficacy cutoff and will be denoted as

. In our expansions, we denote futility cutoffs

as

, and efficacy cutoffs

as

.
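The ceiling that early futility termination places on power can be made concrete with a short calculation: a trial stopped at the first stage can never reject the null hypothesis, so no SSR rule applied afterwards can push the power above one minus the probability of early termination at the true response rate. The sketch below is illustrative only; the cutoff `r1`, stage 1 size `n1`, and response rates are hypothetical values, not taken from this article.

```python
from math import comb


def prob_early_stop(r1, n1, p):
    """Probability of observing at most r1 responses among n1 subjects."""
    return sum(comb(n1, k) * p**k * (1 - p) ** (n1 - k) for k in range(r1 + 1))


def power_ceiling(r1, n1, p):
    """Upper bound on power for any design that stops for futility
    when at most r1 responses are seen in the first n1 subjects."""
    return 1.0 - prob_early_stop(r1, n1, p)


# Hypothetical stage 1 rule: stop if at most 1 response among 10 subjects.
for true_rate in (0.30, 0.25, 0.20):
    print(true_rate, round(power_ceiling(1, 10, true_rate), 4))
```

With such a rule the ceiling falls quickly as the true response rate decreases, which is why a larger first stage may be needed before SSR can help.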

Table 1. Notations.

3.2.2. The average design – an augmentation

Jung et al. (2004) noted that “the two Simon’s designs may result in highly divergent sample size requirements”. For example, if ,

,

,

, then

,

for the minimax design and

,

for the optimal design. Such divergence could present challenges for trial designers, and also suggests that other designs may be reasonable choices. Jung et al. (2004) proposed the admissible designs. We propose an additional design option, the average design, to balance the desire to minimize the

(the optimal design) and that to minimize the

(the minimax design). The optimal and the minimax designs will be derived first. Let

,

be the smallest integers larger than the average of

’s and

’s from the optimal and minimax designs, respectively. Then search for

’s that satisfy

, and

.

can be chosen such that

is the smallest integer satisfying the requirement on type I and type II errors. Design examples are given in . The

and

for the average design are smaller than

, and

, respectively. Jung’s admissible designs aim to achieve the minimum of weighted average of

(with weight

) and

(with weight

), while the average design balances the desire for the smallest

and the smallest

. Hence, the motivation of the average design is similar to Jung’s admissible design with

. However, they are obtained through different search processes, and thus are not expected to be exactly the same. The average design offers an additional option for trial designers when the minimax design and the optimal design are very different. Three admissible designs from Jung et al. (2004, ) are presented in for comparison with the average design.

Table 2. Comparing the average design and Jung’s admissible design.

In , in the case of , the average design is different from Jung’s admissible design. In the case of

, there are two Jung’s admissible designs, and one of them is the same as the average design.

3.2.3. The modified Simon’s design

Because of the necessity of achieving , it is natural to think about modifying Simon’s design, by adding this constraint to Simon’s considerations in the search of the quadruplet

for the two-stage design, such that the following will be satisfied: i) the type I error satisfies

. ii) The power under

satisfies

. iii)

. Similar to Simon’s design, among all the quadruplets that satisfy these constraints, the one with the smallest

will be selected as the minimax design, and the one with the minimum

will be the optimal design. However, our investigation shows that the requirement of

frequently led to the reduction of

, and the difference between the modified design and the original Simon’s design is often minor. Consequently, the modified design combined with SSR may not meaningfully improve the power of the original Simon’s design

combined with SSR if the true response rate is close to

. Hence, the modified design will not be further discussed.

3.2.4. The expansions

Our investigation further shows that besides the requirement that ,

(at which the SSR will be performed) can have significant impact on the power of a design combined with SSR. In order for a two-stage design plus SSR to have substantial power increase over Simon’s original design

plus SSR,

will need to be larger than

. We explore several possibilities for

, each of which satisfies

.

3.2.4.1. Two-stage designs

The hybrid design

The design automatically satisfies the condition

. Hence,

is an obvious candidate for

in a two-stage design expansion. The next step is to search for

and

to satisfy the type I error and power constraints under the alternative hypothesis of

. This design aims to address both of the possibilities of

and

, and it uses parameters from

(i.e.,

). Therefore, it is named the hybrid design. With this design,

. It has the same

and

as with

.

will be smaller than

(so

is permissible and

). Hence,

, and

will be permissible as well. The process of identifying

,

,

and

is detailed in the online supplemental material. Let

. With this sample size and response rate of

, a fixed sample size trial will have about

power and type I error

. The difference between

and

could be large enough such that

. For example, suppose that

,

, and

. Then

from

will be 100, which is greater than

. In such a situation, it is reasonable to have an additional hypothesis test at

, and such that the null hypothesis will be rejected if the number of responses exceeds some efficacy boundary

at

. Details are provided in the online supplemental material. There will be many candidates for

, and it is natural to select the smallest

. However, as discussed, it is possible that

, and selecting the smallest

could result in selecting

in such a scenario. A small

means that the final analysis will happen soon after the stage 1 interim analysis, which may not be practical. Therefore, the design includes an option such that the user can set a minimum value for

, which will take the enrollment into consideration. The selection of

can be optimized by evaluating the operating characteristics of the hybrid design + SSR with simulations in DACT.

The midpoint design

Since , it will be permissible. However, because of the choices for

and

,

could be very close to

and much larger than

. It could be desirable to reduce

. This can be achieved with a midpoint design, which is also a two-stage design. In this design,

and

will be derived first, then

, where

is determined by the user and can be optimized with simulations (provided in the DACT software). Then,

,

,

will be searched. The details are provided in the online supplemental material. Similar to the hybrid design, the midpoint design may include an efficacy threshold

, and a minimum for

.

3.2.4.2. Three stage design

A three-stage design, which includes two interim futility analyses, is more effective at reducing than the midpoint design (see and for simulation results).

No interim hypothesis testing

In this design, subjects will be enrolled in stage 1. If no more than

responses are observed, then the trial would be stopped for futility. Otherwise, the trial proceeds to the second stage and enrolls to a total of

subjects. If no more than

responses are observed, then the trial would be stopped for futility. Otherwise, the trial proceeds to the third stage and enrolls to a total of

subjects. The null hypothesis of

will be rejected if more than

responses are observed at the end of stage 3. The details for searching

are provided in the online supplemental material.
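For a given set of cutoffs, the exact operating characteristics of such a multi-stage futility design can be obtained by propagating the distribution of the cumulative number of responses from stage to stage. The sketch below illustrates this evaluation step only and is not the search procedure of the supplemental material; the function name, argument layout, and example cutoffs are hypothetical.

```python
from math import comb


def binom_pmf(k, n, p):
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def multistage_oc(cum_n, futility, r_final, p):
    """Exact P(reject H0) and expected sample size for a design with
    cumulative sample sizes cum_n, interim futility cutoffs `futility`
    (one per interim stage), and final rejection cutoff r_final."""
    cutoffs = list(futility) + [r_final]
    dist = {0: 1.0}            # cumulative responses among trials still running
    prev_n, expected_n = 0, 0.0
    last = len(cum_n) - 1
    for stage, (n_k, cut) in enumerate(zip(cum_n, cutoffs)):
        m = n_k - prev_n       # subjects newly enrolled in this stage
        new = {}
        for x, w in dist.items():
            for k in range(m + 1):
                new[x + k] = new.get(x + k, 0.0) + w * binom_pmf(k, m, p)
        passed = {x: w for x, w in new.items() if x > cut}
        reached = sum(new.values())
        # Every trial reaching the last stage ends there; earlier stages end
        # only for trials at or below the futility cutoff.
        stopped = reached if stage == last else reached - sum(passed.values())
        expected_n += n_k * stopped
        dist, prev_n = passed, n_k
    return sum(dist.values()), expected_n


# Hypothetical comparison under a null response rate of 0.10.
print(multistage_oc([10, 29], [1], 5, 0.10))          # two stages
print(multistage_oc([10, 20, 29], [1, 2], 5, 0.10))   # extra futility look
```

Adding a futility look with the same final cutoff can only lower both the rejection probability and the expected sample size, so the cutoffs generally need to be searched again to restore the target power.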

With interim hypothesis testing

Let ,

be chosen as above. Similar to the situation in the hybrid design, it is possible that

. A hypothesis test can be added at

, such that the null hypothesis is rejected, and the trial can be terminated if the number of responses exceeds some threshold

. The details for searching

are provided in the online supplemental material.

3.3. Inference

After the trial, with or without an SSR, the median unbiased point estimate and two-sided exact confidence interval for can be obtained following Gao, Liu, and Mehta (Gao et al. 2013). Then, the estimates for

can be obtained as that for

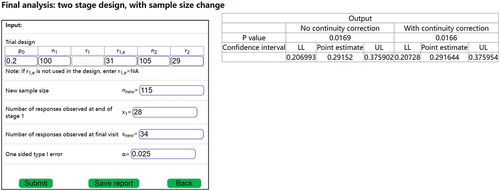

. The inference can be conducted using DACT. is an example of final analysis for an adaptive two-stage design in which the sample size was changed after the interim analysis.

In , ,

,

,

,

. The number of observed responses at the interim analysis (at

) is

. The value of

is not needed for the final analysis, because the trial continued after the interim analysis, and thus the final analysis does not need the value of

(only

is needed). After the interim analysis, the sample size was changed to

. The final observed number of responses is

. We note that the final analysis only involves

, but not the adjusted

or

. We assume that simulations were conducted at the trial design stage (e.g. and ) to determine whether continuity correction is necessary. If continuity correction was not necessary, then the output without continuity correction is used as the final inference, with the estimated response rate of

, the 95% confidence interval of (0.206993, 0.375902), and a p-value of 0.0169. Otherwise, the inference with continuity correction is used, with the estimated response rate of

, the 95% confidence interval of (0.206728, 0.375954), and a p-value of 0.0166.

4. Design examples and simulations

4.1. Design examples

Suppose that a single-arm phase II trial is being designed. Suppose that ,

,

. There are several possible designs: i)

: Simon’s design with

; ii)

: Simon’s design with

; iii) The hybrid design and the midpoint design (

= 0.5) with

,

. The minimum value for

is set to 5 for the purpose of discussion; iv) the three-stage design.

is used for discussion. Investigators can use any

, as long as

. Note that the search for

and

is such that

are associated with

and

as actual probabilities of $\alpha$-spending, not nominal $\alpha$-spending (the sum of nominal $\alpha$-spending will be greater than

). So the condition of

is not a conservative requirement. In practice, larger

may be selected if the investigators believe (e.g., based on literature and/or clinical evidence) that the response rate is more likely to be closer to

, smaller

may be selected otherwise. The impact of the $\alpha$-spending can be investigated with simulations (with SSR) to optimize power over the range of response rates of interest,

. Tables 3 and 4 present design examples.

indicates type I error, and PW denotes power.

Table 3. Simon’s two-stage design with augmentation (fixed sample).

Table 4. The expanded designs (fixed sample).

4.2. Simulations

If the true response rate is , then both the Simon’s design

, with

, and all of the expanded designs with

, and

, will have power less than

. We conducted the simulations to check if combining with SSR can improve the power to be close to

for each of the designs. We use a hypothetical maximum sample size of 140. This is close to

. The critical boundaries without continuity correction are used when the type I error is adequately controlled without the correction. Otherwise, the critical boundaries with continuity correction are used.

Simulations were conducted to investigate the operating characteristics of each design with SSR and the results are presented in . The algorithm for the SSR is described in section 3.1.1. The results include: type I error () with and without continuity correction (

and

), power under

and

, with or without continuity correction (

and

), expected sample size under both

and

(

and

), as well as the probability of early termination under

(

). All simulations are supported by the DACT software. DACT provides all the outputs summarized in .

Table 5. Simulation on SSR with Simon’s two-stage design.

Table 6. Simulation on SSR with the expanded two-stage designs.

All type I errors without continuity correction () were less than 0.025. Hence, continuity correction was not necessary for the scenarios in .

All type I errors without continuity correction () for the hybrid design exceeded 0.025, while the continuity-corrected type I errors (

) were less than 0.025. Hence, continuity correction was necessary for the hybrid design in the scenarios in .

All type I errors without continuity correction () for the midpoint design were less than 0.025. Hence, continuity correction was not necessary for the midpoint design in the scenarios in .

The type I errors without continuity correction () for the optimal design exceeded 0.025, while the continuity-corrected type I errors (

) for the optimal design were less than 0.025. Hence, continuity correction was necessary for the optimal design in the scenarios in .

The type I errors without continuity correction () for the minimax and average designs were less than 0.025. Hence, continuity correction was not necessary for the minimax and average designs in the scenarios in . , and show that the three-stage design has the smallest

.

Table 7. Simulation on SSR with the expanded three-stage designs.

If the true response rate is ,

The power of

+SSR () under

was only 0.727 for the optimal design, and even smaller, at 0.668, for the minimax design + SSR, confirming that simply adding SSR to

could be insufficient to provide desirable power for all

between

and

. Larger sample sizes

and

would be necessary to achieve larger power.

The purpose for introducing the expansions was that the expansions in combination with SSR will have more power than

combined with SSR. It is noted that: i) The sample sizes (

and

) for all the expanded designs were larger than that for

and were permissible. Interim analysis with larger sample sizes (at

) have more information time and smaller variation, so the interim analysis results are more accurate. Hence, the SSR with the expanded designs was more reliable than the SSR with

.

Although the sample size of the three-stage designs was larger than that of

, the simulated

for the three-stage design combined with SSR () was very close to that of

combined with SSR (); i.e., the three-stage design combined with SSR performs well with respect to Simon’s considerations.

for Simon’s design

, while

for all the expanded designs.

The simulated power does not exceed

for all designs.
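The expected sample size comparison between two-stage and three-stage designs can be reproduced exactly for designs that stop only for futility. The sketch below is an illustration under that assumption; the function names and all boundary values are hypothetical and are not the designs reported in the tables above.

```python
from math import comb


def binom_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def expected_n(bounds, sizes, p):
    """Expected sample size of a multi-stage futility-only design.

    sizes[i]  : cumulative sample size at the end of stage i.
    bounds[i] : futility bound after stage i (stop if cumulative
                responses <= bounds[i]); one entry per interim look,
                so len(bounds) == len(sizes) - 1.
    """
    dist = {0: 1.0}  # cumulative responses -> probability of still being on study
    prev_n = 0
    total = 0.0
    for i, n_cum in enumerate(sizes):
        m = n_cum - prev_n
        total += m * sum(dist.values())  # stage i is enrolled only if not stopped
        if i < len(sizes) - 1:
            new = {}
            for x, pr in dist.items():
                for k in range(m + 1):
                    new[x + k] = new.get(x + k, 0.0) + pr * binom_pmf(k, m, p)
            # Keep only the paths that pass the futility bound at this look.
            dist = {x: pr for x, pr in new.items() if x > bounds[i]}
        prev_n = n_cum
    return total


if __name__ == "__main__":
    p0 = 0.10  # hypothetical null response rate
    print("two-stage  :", expected_n([1], [10, 29], p0))
    print("three-stage:", expected_n([1, 3], [10, 20, 29], p0))
```

With the same first look and the same maximum sample size, adding a second futility look can only reduce the expected sample size under the null, which is the sense in which a three-stage design can have a larger maximum sample size and still remain close to Simon's design on this criterion.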

5. Discussions

Lin and Shih (2004) facilitate hypothesis tests for two possible response rates, an optimistic and a skeptical target rate. From this perspective, the design of Lin and Shih (2004) is more flexible than either Simon’s design (Simon 1989) or that of Jung et al. (2004), since both of the latter conduct hypothesis testing on only one target response rate. However, in general, SSR (either conditional error function based, such as that of Englert and Kieser (2012, 2013), or the adaptive sequential methods in our proposal) allows for a range of possible response rates and is thus more flexible than the design of Lin and Shih (2004).

Simon’s designs use only a binomial endpoint, which may be considered a limitation. However, a response rate (such as ORR) may be more intuitive than other endpoints, and this intuitiveness may be the reason for the popularity of Simon’s design. On the other hand, expanding Simon’s design to other endpoints (e.g., progression-free survival) could offer more options for investigators conducting phase II oncology trials, but that is beyond the scope of this article.

We propose several expansions for Simon’s design. They are intended to be used together with SSR, so that the planned sample size provides sufficient power when the response rate is , with the flexibility to increase the sample size if the observed response rate is closer to

. The simulations show that all of the expanded designs combined with SSR have more desirable power than Simon’s design combined with SSR, as intended. The three-stage design is statistically more efficient than the two-stage expansions because it has similar simulated power but a smaller

than the other expansions. Three-stage designs have been previously proposed (Chen 1997; Ensign et al. 1994). It is recognized that a three-stage design is operationally more complicated than a two-stage design, and we note that most clinical trials involve at most one interim analysis. However, when feasible, statistical optimality could be preferable despite the added operational complexity. For example, some single-arm phase II oncology trials do conduct multiple interim analyses (e.g., the Zuma-1 trial protocol (2015a) and the Zuma-2 trial protocol (2015b)). If a three-stage design is not feasible, either the hybrid or the midpoint design may be used.

In practice, the operating characteristics of any of the proposed designs can be influenced by several factors, such as ,

,

.,

and

(for two-stage expanded designs),

and

(for three-stage expanded designs). For the midpoint design, the OCs are also affected by the choices of

. The OCs of each design under consideration should be thoroughly investigated with simulations. Designs that optimize the considerations of budget, patient enrollment, and feasibility can then be selected. All these simulations can be conducted with the DACT software. In general, we recommend the three-stage design because of its statistical efficiency.
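A simulation of operating characteristics can be sketched in a few lines for a plain two-stage futility design without SSR. This is not the DACT software and does not reproduce the adaptive procedure in this article; the function name, the seed, the number of replicates, and the design values are assumptions made for illustration.

```python
import random


def simulate_two_stage(r1, n1, r, n, p, n_sims=20000, seed=1):
    """Monte Carlo estimate of operating characteristics.

    Stage 1 stops for futility if responses <= r1 among n1 patients;
    otherwise the trial continues to n patients and rejects the null
    hypothesis if total responses exceed r.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    rejections = 0
    early_stops = 0
    total_n = 0
    for _ in range(n_sims):
        x1 = sum(rng.random() < p for _ in range(n1))
        if x1 <= r1:
            early_stops += 1
            total_n += n1
            continue
        x2 = sum(rng.random() < p for _ in range(n - n1))
        total_n += n
        if x1 + x2 > r:
            rejections += 1
    return {
        "reject": rejections / n_sims,  # type I error or power, depending on p
        "pet": early_stops / n_sims,    # probability of early termination
        "avg_n": total_n / n_sims,      # average sample size
    }


if __name__ == "__main__":
    # Hypothetical design and response rates, for illustration only.
    for p in (0.10, 0.20, 0.30):
        print(p, simulate_two_stage(1, 10, 5, 29, p))
```

Repeating such a run over a grid of response rates, stage sizes, and boundaries gives the kind of comparison across candidate designs that the paragraph above recommends.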

Disclosure statement

No potential conflict of interest was reported by the author(s).

Supplemental data

Supplemental data for this article can be accessed online at https://doi.org/10.1080/10543406.2024.2341673.


References

- Bauer, P., and K. Köhne. 1994. Evaluation of experiments with adaptive interim analyses. Biometrics 50 (4):1029–1041. doi:10.2307/2533441.

- Chang, M. N., T. M. Therneau, H. S. Wieand, and S. S. Cha. 1987. Designs for group sequential phase II clinical trials. Biometrics 43 (4):865–874. doi:10.2307/2531540.

- Chen, T. T. 1997. Optimal three-stage designs for phase II cancer clinical trials. Statistics in Medicine 16 (23):2701–2711. doi:10.1002/(sici)1097-0258(19971215)16:23<2701:aid-sim704>3.0.co;2-1.

- Chen, Y. H. J., D. L. DeMets, and K. K. G. Lan. 2004. Increasing the sample size when the unblinded interim result is promising. Statistics in Medicine 23 (7):1023–1038. doi:10.1002/sim.1688.

- Cui, L., H. M. Hung, and S. J. Wang. 1999. Modification of sample size in group sequential clinical trials. Biometrics 55 (3):853–857. doi:10.1111/j.0006-341X.1999.00853.x.

- Devore, J. L. 1995. Probability and statistics for engineering and the sciences. 4th ed. USA: Duxbury Press.

- Englert, S., and M. Kieser. 2012. Improving the flexibility and efficiency of phase II designs for oncology trials. Biometrics 68 (3):886–892. doi:10.1111/j.1541-0420.2011.01720.x.

- Englert, S., and M. Kieser. 2013. Optimal adaptive two-stage designs for phase II cancer clinical trials. Biometrical Journal 55 (6):955–968. doi:10.1002/bimj.201200220.

- Ensign, L. G., E. A. Gehan, D. S. Kamen, and P. F. Thall. 1994. An optimal three-stage design for phase II clinical trials. Statistics in Medicine 13 (17):1727–1736. doi:10.1002/sim.4780131704.

- Feller, W. 1945. On the normal approximation to the binomial distribution. The Annals of Mathematical Statistics 16 (4):319–329. doi:10.1214/aoms/1177731058.

- Gao, P., L. Liu, and C. Mehta. 2013. Exact inference for adaptive group sequential designs. Statistics in Medicine 32 (23):3991–4005. doi:10.1002/sim.5847.

- Gao, P., J. H. Ware, and C. Mehta. 2008. Sample size re-estimation for adaptive sequential designs. Journal of Biopharmaceutical Statistics 18 (6):1184–1196. doi:10.1080/10543400802369053.

- Jennison, C., and B. Turnbull. 2003. Mid-course sample size modification in clinical trials based on the observed treatment effect. Statistics in Medicine 22 (6):971–993. doi:10.1002/sim.1457.

- Jennison, C., and B. Turnbull. 2006. Adaptive and non-adaptive group sequential tests. Biometrika 93 (1):1–21. doi:10.1093/biomet/93.1.1.

- Jung, S.-H., T. Lee, K. M. Kim, and S. L. George. 2004. Admissible two-stage designs for phase II cancer clinical trials. Statistics in Medicine 23 (4):561–569. doi:10.1002/sim.1600.

- Kunzmann, K., and M. Kieser. 2016. Optimal adaptive two-stage designs for single-arm trial with binary endpoint. arXiv:1605.00249 [stat.AP].

- Lan, K. K. G., and D. L. DeMets. 1983. Discrete sequential boundaries for clinical trials. Biometrika 70 (3):659–663. doi:10.2307/2336502.

- Lin, Y., and W. J. Shih. 2004. Adaptive two-stage designs for single-arm phase IIA cancer clinical trials. Biometrics 60 (2):482–490. doi:10.1111/j.0006-341X.2004.00193.x.

- Müller, H. H., and H. Schäfer. 2001. Adaptive group sequential designs for clinical trials: Combining the advantages of adaptive and of classical group sequential approaches. Biometrics 57 (3):886–891.

- O’Brien, P. C., and T. R. Fleming. 1979. A multiple testing procedure for clinical trials. Biometrics 35 (3):549–556. doi:10.2307/2530245.

- Pocock, S. J. 1977. Group sequential methods in the design and analysis of clinical trials. Biometrika 64 (2):191–199. doi:10.1093/biomet/64.2.191.

- Proschan, M. A., and S. A. Hunsberger. 1995. Designed extension of studies based on conditional power. Biometrics 51 (4):1315–1324. doi:10.2307/2533262.

- Simon, R. 1989. Optimal two-stage designs for phase II clinical trials. Controlled Clinical Trials 10 (1):1–10. doi:10.1016/0197-2456(89)90015-9.

- U.S. Food and Drug Administration. 2018. Clinical trial endpoints for the approval of cancer drugs and biologics: Guidance for industry. Oncology Center of Excellence, Center for Drug Evaluation and Research (CDER), and Center for Biologics Evaluation and Research (CBER).

- U.S. Food and Drug Administration (CDER and CBER). 2019. Adaptive design clinical trials for drugs and biologics: Guidance for industry.

- Zuma-1 trial protocol. 2015a. https://www.nejm.org/doi/suppl/10.1056/NEJMoa1707447/suppl_file/nejmoa1707447_protocol.pdf.

- Zuma-2 trial protocol. 2015b. https://www.nejm.org/doi/suppl/10.1056/NEJMoa1914347/suppl_file/nejmoa1914347_protocol.pdf.